I have decided to release an early draft of this document so that others may provide feedback. Please let me know what you think.

Writing test cases for performance testing requires a different mindset to writing functional test cases. Fortunately, it is not a difficult mental leap. This article should give you enough information to get you up and running.

First, let's set out some background and define some terms used in performance testing.

- Test case – in performance testing, a test case corresponds to a use case or business process. Just as with a functional test case, it outlines the test steps to be performed and the expected result for each step.

- Test script – a test script is a program, created by a performance tester, that performs all the steps in the test case.

- Virtual user – a virtual user generally runs a single test script. Virtual users do not run test scripts through the Graphical User Interface (as a functional test case automated with a tool such as WinRunner, QuickTest, QARun or Rational Robot would); they simulate a real user by sending the same network traffic a real user would. A single workstation can run multiple virtual users.

- Scenario – a performance test scenario is a description of how a set of test scripts will be run. It outlines how many times an hour they will be run, how many users will run each test script, and when each test script will be run. The aim of a scenario is to simulate real world usage of a system.
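To make the scenario definition concrete, here is a minimal sketch of how one might be written down and turned into a per-user pacing figure. The `ScriptLoad` structure, the script names and the numbers are all hypothetical; real tools each have their own scenario format. It assumes that "how many times an hour" means a target number of business transactions per hour shared evenly across the script's virtual users.

```python
from dataclasses import dataclass


@dataclass
class ScriptLoad:
    """One test script's share of a scenario (hypothetical structure)."""
    script: str          # name of the test script
    virtual_users: int   # how many virtual users run this script
    per_hour: int        # target business transactions per hour for the script
    start_after_s: int   # when this script's users start, relative to scenario start

    def pacing_seconds(self) -> float:
        """Seconds between iteration starts for one user so the hourly target is met."""
        iterations_per_user = self.per_hour / self.virtual_users
        return 3600 / iterations_per_user


# Hypothetical scenario: two scripts, the second starting five minutes in.
scenario = [
    ScriptLoad("login_and_search", virtual_users=20, per_hour=600, start_after_s=0),
    ScriptLoad("place_order", virtual_users=5, per_hour=60, start_after_s=300),
]

for load in scenario:
    print(f"{load.script}: {load.virtual_users} users, start at {load.start_after_s}s, "
          f"one iteration every {load.pacing_seconds():.0f}s per user")
```

With these figures each search user starts an iteration every 120 seconds and each order user every 300 seconds. Keeping users, rate and start time together like this makes it easy to see whether a scenario really reflects expected real-world usage.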

Writing a test case for performance testing is basically writing a simple Requirements Specification for a piece of software (the test script). Just as with any specification, it should be unambiguous and as complete as possible.

Every test case will contain the steps to be performed with the application and the expected result for each step. As a performance tester will generally not know the business processes that they will be automating, a test case should provide more detail than may be included in a functional test case intended for a tester familiar with the application.

It is important that the test case describes a single path through the application. Adding conditional branches to handle varying application responses, such as error messages, will greatly increase script development time and the time taken to verify that the test script functions as expected. If a test script encounters an error that it does not expect, it will usually just stop. If the Project Manager decides that test scripts should handle errors the same way a real user would, then information should be included on how to reproduce each error condition, and additional scripting time should be included in the project plan.
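The "single path, stop on anything unexpected" behaviour can be sketched as follows. The step tuples mirror a test case's steps and expected results. `send` is a hypothetical stand-in for whatever protocol layer a real tool provides, and checking for expected text in the response is one assumed way to verify a step.

```python
class UnexpectedResponse(Exception):
    """Raised when a step's response does not match the test case's expected result."""


def run_single_path(steps, send):
    """Run the steps in order and stop at the first unexpected response.

    steps: list of (step_name, request, expected_text) tuples taken from the test case.
    send:  callable that sends a request and returns the response body
           (hypothetical; a real tool supplies its own protocol layer).
    """
    completed = []
    for name, request, expected in steps:
        body = send(request)
        if expected not in body:
            # No conditional branch for error handling: the script simply stops.
            raise UnexpectedResponse(f"step '{name}': expected {expected!r}")
        completed.append(name)
    return completed


# Usage with a canned responder standing in for the system under test.
steps = [
    ("open login page", "GET /login", "Sign in"),
    ("search", "POST /search", "results found"),
]
canned = {"GET /login": "<h1>Sign in</h1>", "POST /search": "12 results found"}
print(run_single_path(steps, canned.get))  # ['open login page', 'search']
```

Handling an error "the way a real user would" means adding a branch for each error condition inside that loop, which is where the extra scripting and verification time comes from.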

The main reason a user may be presented with a different flow through the application is the input data that is used. Each test case will be executed with a large amount of input data. Defining data requirements is a critical part of planning for a performance test, and is the most common area to get wrong on a first attempt. It is very easy to forget that certain inputs will present the user with different options.
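One way to guard against inputs that change the flow is to screen the test data against the path the script was built for. The customer records and the rules in `follows_scripted_path` below are invented for illustration. In practice those rules have to come from someone who knows the business process.

```python
# Hypothetical input data for an "update customer address" test case.
customers = [
    {"id": "C001", "country": "AU", "account_on_hold": False},
    {"id": "C002", "country": "NZ", "account_on_hold": False},  # assumed: different address form
    {"id": "C003", "country": "AU", "account_on_hold": True},   # assumed: extra warning screen
]


def follows_scripted_path(row):
    """True if this row takes the single path the test script was built for (assumed rules)."""
    return row["country"] == "AU" and not row["account_on_hold"]


usable = [r["id"] for r in customers if follows_scripted_path(r)]
rejected = [r["id"] for r in customers if not follows_scripted_path(r)]
print("usable:", usable, "rejected:", rejected)
```

Rejected rows either need to be removed from the data pool or covered by a separate test case with its own path.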

The other important data issues to identify are any data dependencies and any potential problems with concurrency. Is it important that data is used in some business functions before it is used in others? And will data modified by virtual users cause other virtual users to fail when they try to use the same data? The test tool can partition the data used by each virtual user if these requirements can be identified. It can be difficult for a performance tester with little knowledge of the application to debug test script failures, especially if the failures only occur when multiple virtual users are running at once.
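Partitioning data so that no two virtual users touch the same records can be as simple as giving each user its own contiguous block of rows. The sketch below is a generic illustration rather than any particular tool's feature, and the account IDs are made up.

```python
def partition(rows, virtual_users):
    """Give each virtual user its own disjoint block of test data rows."""
    base, extra = divmod(len(rows), virtual_users)
    slices, start = [], 0
    for vuser in range(virtual_users):
        # The first `extra` users take one additional row so every row is used once.
        size = base + (1 if vuser < extra else 0)
        slices.append(rows[start:start + size])
        start += size
    return slices


accounts = [f"ACC{n:03d}" for n in range(10)]  # hypothetical account IDs
for vuser, rows in enumerate(partition(accounts, 3), start=1):
    print(vuser, rows)
```

Because the blocks are disjoint, a record modified by one virtual user is never picked up by another, which removes one common source of failures that only appear under concurrent load.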

One of the most important pieces of information a performance test is designed to discover is the response time of the system under test – both at the overall business function level and at the low level of individual steps in the test case, such as the time it takes for a search to return a result set. Any test cases provided to a performance tester should clearly define the start and end points for any transaction timings that should be included in the test results.
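A transaction timing point is essentially a named start and end mark around some steps. Here is a minimal sketch using a Python context manager. The `timings` store and the transaction name are hypothetical, and `time.sleep` stands in for the real request.

```python
import time
from contextlib import contextmanager

# Hypothetical results store: transaction name -> list of response times in seconds.
timings = {}


@contextmanager
def transaction(name):
    """Time the steps between a transaction's start point and end point."""
    start = time.perf_counter()  # start point, e.g. just before "Search" is clicked
    yield
    # End point, e.g. once the result set has arrived. If a step inside the block
    # raises, the exception propagates from the yield and nothing is recorded.
    timings.setdefault(name, []).append(time.perf_counter() - start)


with transaction("search"):
    time.sleep(0.05)  # stand-in for sending the search request and receiving results

print(f"search took {timings['search'][0]:.3f}s")
```

Whatever the tool, the test case needs to say exactly which user action marks the start and which response marks the end, because that is what the two marks in the script are placed around.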

It is important to remember that the test script only creates the network traffic that would normally be generated by the application under test. This means that any operations that happen only on the client are not simulated and therefore are not included in any transaction timing points. A good example is a client application that runs on a user’s PC and communicates with a server. Starting the client application takes 10 seconds and logging in takes 5 seconds, but since only the login sends network traffic to the server, the transaction timing point will measure only 5 seconds.
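The arithmetic in that example can be spelled out as a small worked sketch. The step list uses the 10 and 5 second figures from the example above. The `sends_network_traffic` flag is an illustrative assumption about how one might annotate a test case.

```python
# Test case steps as a functional tester would describe them (figures from the example above).
test_case = [
    {"step": "start client application", "seconds": 10, "sends_network_traffic": False},
    {"step": "log in", "seconds": 5, "sends_network_traffic": True},
]

# A virtual user replays only the network traffic, so only those steps can be timed.
scripted = [s for s in test_case if s["sends_network_traffic"]]
measured = sum(s["seconds"] for s in scripted)
user_experience = sum(s["seconds"] for s in test_case)
print(f"real user waits {user_experience}s; transaction timing reports {measured}s")
```

Flagging client-only steps in the test case like this makes the gap between what users experience and what the performance test can report explicit from the start.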

Operations that happen only on the client, including the time users take to enter data or spend looking at the screen, are simulated with user think time: an intentional delay inserted into the test script. If no think time is included, virtual users will execute the steps of the test case as fast as they can, resulting in greater (and unrealistic) load on the system under test. Depending on the sophistication of the performance test tool, user think time may be automatically excluded from the transaction timing points. In any case, think times are generally inserted outside of any transaction timing points.
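A minimal sketch of think time placed between, never inside, timing points. The plus or minus 50% random variation is an assumed default, not something every tool does. `time.sleep` calls stand in for real requests, and the function names are hypothetical.

```python
import random
import time


def think(mean_s, spread=0.5, sleep=time.sleep):
    """Pause like a user reading or typing, randomised to +/- `spread` of the mean."""
    delay = random.uniform(mean_s * (1 - spread), mean_s * (1 + spread))
    sleep(delay)
    return delay


def timed(name, action, results):
    """Record how long `action` takes; think time is never called from inside here."""
    start = time.perf_counter()
    action()
    results[name] = time.perf_counter() - start


results = {}
timed("open_search_page", lambda: time.sleep(0.02), results)  # stand-in request
think(0.1)  # user reads the page and types a query, outside any timing point
timed("submit_search", lambda: time.sleep(0.03), results)  # stand-in request
print(results)
```

Randomising the delay keeps virtual users from falling into lock-step, and keeping `think` outside `timed` means the reported response times contain only the system's own time.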

While a functional test case will be run once from start to finish, a performance test case will be run many times (iterated) by the same virtual user in a single scenario. Information on how the test steps will be iterated should be included in the test case. For example, if a test case involves a user logging in and performing a search, and the entire test case is iterated by the virtual user, then the scenario may generate too many logins if real users generally stay logged in to the application. A more realistic test case may have the virtual user log in once and then keep performing the same action for as long as the virtual user runs.
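The "log in once, iterate the business action" structure can be sketched like this. The `VirtualUser` class and its request names are hypothetical. `send` stands in for the tool's protocol layer, and here it simply records what would have been sent.

```python
class VirtualUser:
    """Hypothetical virtual user: log in once, repeat the business action, log out once."""

    def __init__(self, send):
        self.send = send  # callable that issues one request (stand-in for the tool)

    def run(self, iterations):
        self.send("login")  # once per virtual user, not once per iteration
        for i in range(iterations):
            self.send(f"search #{i + 1}")  # the iterated part of the test case
        self.send("logout")  # once, when the virtual user finishes


sent = []
VirtualUser(sent.append).run(iterations=3)
print(sent)  # ['login', 'search #1', 'search #2', 'search #3', 'logout']
```

If the whole test case were iterated instead, three iterations would produce three logins and three logouts, which overstates login load if real users stay logged in.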

When a script is iterated, consideration should be given to the non-obvious details of how it is iterated. A good example would be a test script simulating users using an Internet search engine. When the test script is iterated, simulating a new search operation, should the virtual user establish a new network connection and empty their cache or should every iteration simulate the same user conducting another search?
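The difference between the two iteration styles can be made visible with a simulated session. This sketch assumes each "user" has one connection and a cache of static resources. The `Session` class and the resource names are hypothetical, and no real network traffic is sent.

```python
from dataclasses import dataclass, field


@dataclass
class Session:
    """Hypothetical per-user state a test tool might emulate."""
    connection_id: int
    cache: set = field(default_factory=set)


def run_search_iterations(queries, new_user_each_iteration):
    """Return (connections opened, static resources downloaded) for one virtual user."""
    connections = 0
    session = None
    downloads = []  # static resources actually fetched (cache misses)
    for query in queries:
        if session is None or new_user_each_iteration:
            connections += 1
            session = Session(connection_id=connections)  # new connection, empty cache
        for resource in ("logo.png", "search.js"):
            if resource not in session.cache:
                downloads.append(resource)
                session.cache.add(resource)
        # ...the search request for `query` would be sent here...
    return connections, len(downloads)


print(run_search_iterations(["a", "b", "c"], new_user_each_iteration=True))   # (3, 6)
print(run_search_iterations(["a", "b", "c"], new_user_each_iteration=False))  # (1, 2)
```

The same three searches produce three times the connections and downloads when each iteration is treated as a new user, which is why this detail needs to be agreed on rather than left to a tool's default.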

As all performance test tools have different default behaviours, a good performance tester should clarify this type of detail with business and technical experts. Some performance test tools make these details easier to change than others. If it is not practical to emulate all the attributes of the expected system traffic with a particular tool, then it should be noted as a limitation of the performance test effort, and a technical expert should assess the likely impact.

Hopefully this article has provided some insight into the extra considerations that must be given when writing a performance test case, rather than a functional test case. As with any software specification, a performance test case may need to be refined as questions are raised by the performance tester.

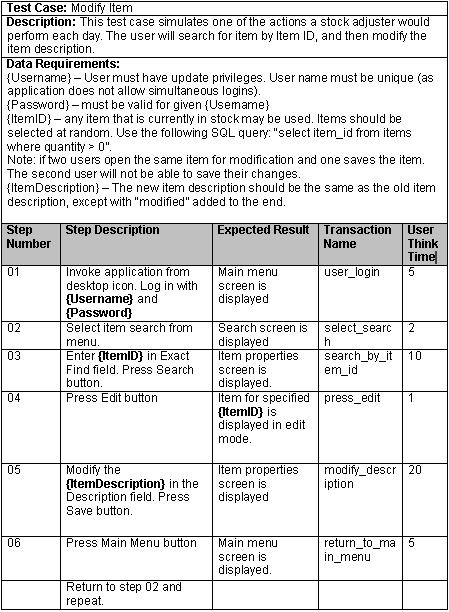

An example test case:

