Since the release of Performance Center 11.0, the product has been based on the HP Application Lifecycle Management (HP ALM) platform (i.e. Quality Center). This architectural change means that functional testers and performance testers can share a combined testing platform – a single repository for requirements, test cases, and defects – giving full visibility across the functional, non-functional and performance testing areas.

It sounds great, but there are pros and cons to combining Performance Center and ALM/Quality Center…

Prior to version 11.0, Performance Center was completely separate from ALM/Quality Center. As companies upgraded from Performance Center 9.52 to version 11.0 or 11.5, they had to make an important decision: should they maintain separate instances, or combine them?

Some customers are still using Performance Center 9.52 and haven’t had to make the decision yet. Choosing which deployment pattern to use will have a big impact on the users of these tools, and should be carefully thought through.

QC/ALM users and Performance Center users have different needs

| Quality Center/ALM | Performance Center |
|---|---|
| Large number of users e.g. 500 accounts, 250 concurrent users | Small number of users e.g. 20 accounts, 10 concurrent users |
| Downtime is unacceptable. Functional testers use the tool 24×7 (onshore/offshore testers), and an outage will affect a large number of users. A big outage (e.g. half a day for an upgrade) must be planned months in advance and rehearsed in a non-production instance. | Downtime can be negotiated, as there will only be a small number of users at any time, and performance testers are more easily able to move their test execution windows to accommodate downtime. |
| Upgrade cycle is quite slow. Feature set is stable and mature. Users would prefer to be using a 3-year old version of the product than risk minor changes. | Upgrade cycle is much faster. Support for new protocols is being added with each release. Many users want to use the current feature pack so they can test the latest version of SAPGUI or Silverlight, etc. |

The main problem I see is that performance testers desperately want to upgrade to the latest version, but the people who manage ALM don’t want to do it as the functional testers have no compelling reason to want an upgrade, and are highly resistant to any change that may cause downtime.

Many companies who own Performance Center are choosing to maintain two separate instances of ALM – one for performance testers, and one for functional testers.

Synchronizing data between Performance Center and ALM

The obvious solution to the problem of meeting the needs of both performance testers and functional testers, while still giving a unified view across both testing streams, is to have two separate instances, but synchronize the information between them. This option seems to have all of the benefits with none of the drawbacks.

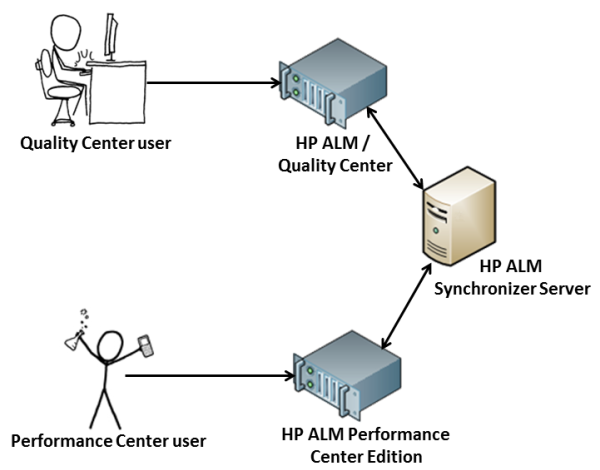

HP provides a tool called the ALM Synchronizer that sounds like it should help, at least in theory.

Unfortunately, the Synchronizer tool does not support Performance Center. Here is the relevant section from the HP ALM Synchronizer v1.40 Installation Guide:

Defects synchronization between two HP ALM 11.00 endpoints is not supported for HP Quality Center Starter Edition, HP Quality Center Enterprise Edition, or HP ALM Performance Center Edition.

HP (or an HP partner) should urgently work to fix this, as a lot of Performance Center customers would benefit from the ability to synchronize data between HP ALM/Quality Center and Performance Center.

Update: in a comment below, Ray mentions that Tasktop Sync might be a solution for this problem.

It’s time to decide!

Lots of HP customers are still using Performance Center 9.52, so haven’t had to make the choice of whether to combine their instances or keep them separate. Time is running out for them to make their decision, as they will have to upgrade to an ALM-based version of Performance Center before the end of 2013.

[Performance Center 9.x End of Support customer letter](https://www.myloadtest.com/wp-content/uploads/migrated-resources/performance-center-9x-eol-customer-letter.pdf)

The End of Support date for Performance Center 9.x is December 31, 2013. As of this date all customer support activities for this version will cease, this includes:

- Telephone support

- Security Rule updates

- Product upgrades

Performance Center 8.1x has reached end of support per June 30, 2008.

If you work at a company that owns Performance Center, and you have an opinion on the pros and cons of merging Performance Center and ALM/Quality Center, please share your experiences in the comment section below.

9 Comments


Betteridge’s law of headlines states that “Any headline which ends in a question mark can be answered by the word no.”

Hey Stuart, I have a clarification on Performance Center 11.52.

From the Installation Guide, I learned that “Recording VuGen scripts is supported on hosts on 32-bit machines only”.

We have bought PC11.52 with Windows 2008 R2 Std 64 Bit SP1.

Does VuGen support recording here? If not, are there any patches from HP that add support for this?

Please help me in understanding this.

Thanks!!!

We have a “worst of both worlds” situation at my company. We are stuck on PC 11.0 because they won’t upgrade ALM, and we’re not even using any of the features that made them decide that they should be combined. Performance tests are in separate projects to the functional tests. There are only 2 big projects that contain all the scripts and scenarios for all the performance testers in the whole company. Requirements aren’t linked to test cases, which aren’t linked to defects. It’s a poorly thought-out mess that has all the drawbacks you mentioned, and none of the advantages.

I totally agree. And just because it is a unified platform doesn’t mean that the same person should be managing both parts. Different skills are required to manage Performance Center effectively.

Also, something that should be added to the table in the post is that the deployment needs of ALM/QC and PC are different. ALM just needs the user interface to be accessible from everywhere in the company, but Performance Center must be able to generate load on (and monitor) servers in all areas of the network. This is much more complicated, and makes the network location you deploy it to really important.

Also, functional tests usually have clear pass/fail criteria and should be linked back to a requirement. Performance tests are much more fuzzy, so this is difficult.

Nice article anyway.

You make several good points and from my viewpoint I think there are plus/minuses to be added up.

Some of my thoughts

My 2c

I think that this could be partly an issue around framing – influencing how people think about an issue/concept/software tool by the language you use. Here are some examples:

Language is important. Is “unification” always a good thing? Should everything be unified? If I was designing a new house should I be “unifying” the dining area and the bathroom? (sounds efficient!)

Sometimes the most important factor in getting a decision accepted is how you describe the solution to the decision maker.

When you talk about framing an idea so that it sounds great to the decision makers, you are actually cynically exploiting a management anti-pattern that is common in large corporates.

It is bad when the decision maker is not the same person who will be using (or administering) the new system. This is how big companies get lumbered with crap software that no one likes using.

I am assuming that there is value (Throughput) when Quality of Service Engineers (QA + Performance + IT + Business + Customers + Customers’ Customers) can quantify that the investment into ‘Quality’ adds value to the product?

In my experience qualifying this value requires a synchronization towards a goal.

As a 13-year LoadRunner and 10-year QTP engineer (on both pre-production and production), I have seen a focus on reducing Operating Expenses (use freeware, for example): get it into production, and we will let the Customer QA it.

Quality throughout the life-cycle of the product doesn’t magically get injected by any tool (not yet), and maybe no testing is the best way to produce products?

Maybe we are blurring the lines between Implementation and the Interface?

Are there products out there of high quality that we can agree on, and work the problem backwards?

Cheers/andrew

Kelly’s Law (borrowed from Wikipedia):

“K∝1/A (Knowledge is inversely proportional to apparent authority.)

As an individual rises in a hierarchy, be it business, government or any human institution, the person tends to gain more and more power to do things, but less and less knowledge of what to do, or in extreme cases of the need to do anything.

As a result those at the top monopolize power, but have no idea of how to use it; those near the bottom know that things are going wrong, and often have a good idea of what needs to be done, but have no power to do it.”