One of the most poorly understood VuGen Runtime Settings is the one that allows a performance tester to treat “non-critical resource errors as warnings”. The majority of LoadRunner users I have spoken to do not realise that enabling this setting can hide serious load-related defects in the system under test.

LoadRunner automatically detects HTTP 4xx or 5xx responses but, if this runtime setting is enabled, LoadRunner will ignore the HTTP 4xx or 5xx error code when requesting a resource (i.e. the current transaction will not fail).

What are “non-critical” resources?

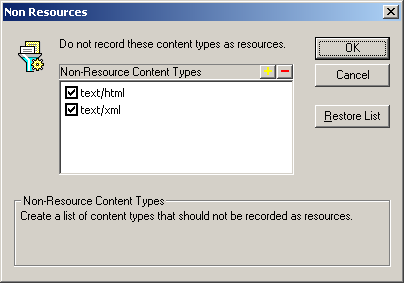

The wording of this runtime setting is a bit misleading. There is no such thing as a “non-critical resource”, just resources, and non-resources. Examples of content types that are classified as resources by default are: images (PNG, GIF, JPEG), stylesheets (CSS), JavaScript, JSON and (sometimes) XML. Whether an HTTP request is for a resource or a non-resource is determined during the script generation step, immediately after recording.

The VuGen recording settings identify non-resources by their MIME type. By default, only text/html and text/xml are identified as non-resources. This omits some rather important MIME types that are commonly used for dynamic content, such as application/xml and application/json.
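As a rough illustration of the rule described above, the following standalone C sketch classifies a Content-Type value against the default non-resource list. It is not VuGen's implementation; the function name `is_non_resource` and the table are hypothetical, and the comparison is case-sensitive for simplicity.

```c
#include <stddef.h>
#include <string.h>

/* Default non-resource MIME types, as listed in the article.
   Everything else is treated as a "resource". */
static const char *const NON_RESOURCE_TYPES[] = { "text/html", "text/xml" };

/* Hypothetical helper: returns 1 if the Content-Type would be recorded as a
   non-resource, 0 if it would be recorded as a resource.
   Parameters such as "; charset=utf-8" are ignored. */
int is_non_resource(const char *content_type)
{
    /* Length of the bare MIME type, up to the first ';' or whitespace. */
    size_t len = strcspn(content_type, "; \t");
    size_t count = sizeof NON_RESOURCE_TYPES / sizeof NON_RESOURCE_TYPES[0];
    size_t i;

    for (i = 0; i < count; i++) {
        if (strlen(NON_RESOURCE_TYPES[i]) == len &&
            strncmp(content_type, NON_RESOURCE_TYPES[i], len) == 0) {
            return 1;
        }
    }
    return 0;
}
```

With this default list, `is_non_resource("application/json")` returns 0, which is exactly the gap the paragraph above warns about: a dynamically generated JSON response would be recorded as a resource.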

When the source code of the VuGen script is generated, requests for non-resources are identified by a web_url or web_custom_request function with a “Resource=0” argument (the web_submit_data and web_submit_form functions are always requests for non-resources, so they don’t need this function argument).

    web_url("portal",
        "URL=http://www.example.com/portal",
        "Resource=0", // <-- look! It's *not* a resource
        "RecContentType=text/html",
        "Referer=",
        "Snapshot=t1.inf",
        // HTML mode means that the HTML source is parsed by the replay engine,
        // and any "resources" that are found are automatically downloaded
        "Mode=HTML",
        // An EXTRA RES(OURCE) is a "resource" that was downloaded during
        // recording, but was not found by the HTML parser
        EXTRARES,
        "Url=/static/splash-screen.png", ENDITEM,
        "Url=/static/background.png", ENDITEM,
        "Url=/static/form-validation.js", ENDITEM,
        LAST);

    // If the recording engine cannot find a parent request for a resource it
    // saw during recording, it will generate a separate web_url statement
    // with "Resource=1"
    web_url("login-form.js",
        "URL=http://www.example.com/static/login-form.js",
        "Resource=1", // <-- note function argument making this a "resource"
        "RecContentType=application/javascript",
        "Referer=http://www.example.com",
        "Snapshot=t2.inf",
        LAST);

During replay, LoadRunner uses the "Resource=" function argument to determine if a request is for a resource or a non-resource.
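The replay-time behaviour described so far can be summarised as a small decision function. This is a sketch based only on the behaviour described in this article, not LoadRunner's actual code; the enum and the function name `classify_response` are hypothetical.

```c
/* Possible outcomes for a single request during replay (hypothetical names). */
typedef enum { STEP_PASS, STEP_WARNING, STEP_FAIL } step_outcome;

/* is_resource:                 1 for "Resource=1" requests and EXTRARES items,
                                0 for "Resource=0" requests.
   http_status:                 the HTTP status code returned by the server.
   resource_errors_as_warnings: 1 if the runtime setting is ticked. */
step_outcome classify_response(int is_resource, int http_status,
                               int resource_errors_as_warnings)
{
    int is_error = (http_status >= 400 && http_status < 600);

    if (!is_error) {
        return STEP_PASS;
    }
    /* The setting only downgrades errors on resources. */
    if (is_resource && resource_errors_as_warnings) {
        return STEP_WARNING; /* the transaction still passes */
    }
    return STEP_FAIL;
}
```

Note that an HTTP 500 on a resource with the setting enabled yields `STEP_WARNING`, so the transaction is still reported as passed.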

"Non-critical resources" are actually really important

VuGen has no way of determining what content types are dynamically generated on the server, except by looking at the MIME type returned during recording. Sometimes dynamically generated content is treated as a "non-critical resource", causing the performance tester to accidentally ignore critical errors.

My favourite example of someone getting themselves into difficulties is from the testing of a new HR self-service portal. Users could log on and view their payslips in PDF format. Each PDF was dynamically generated the first time it was viewed, and cached afterwards. During the load test the requests for the PDFs were returning an HTTP 500 error, but the performance tester didn't notice, as it was an EXTRARES in the script, and he had "non-critical resource errors as warnings" enabled. The only reason anyone found out about this problem was because the server admins were wondering why there were so many exceptions in their app server logs after the test. If your script downloads a PDF, please make sure that you check the PDF content to verify that everything is working correctly.
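One way to "check the PDF content" is to confirm that the response body at least looks like a PDF rather than an error page. The standalone C sketch below is an assumption about how such a check could be written, not a LoadRunner API; the name `looks_like_pdf` is hypothetical, and it assumes the script has already captured the response body and its length.

```c
#include <stddef.h>
#include <string.h>

/* Hypothetical helper: returns 1 if the buffer starts with the "%PDF-" header
   and has an "%%EOF" marker near the end, 0 otherwise. The body is treated as
   binary data, so a length is required and no C string functions are used. */
int looks_like_pdf(const char *body, size_t len)
{
    static const char header[] = "%PDF-";
    static const char trailer[] = "%%EOF";
    const size_t header_len = sizeof header - 1;
    const size_t trailer_len = sizeof trailer - 1;
    size_t start, i;

    if (body == NULL || len < header_len + trailer_len) {
        return 0;
    }
    if (memcmp(body, header, header_len) != 0) {
        return 0; /* e.g. an HTML error page returned with HTTP 500 */
    }

    /* Only scan the last 1024 bytes for the end-of-file marker. */
    start = len > 1024 ? len - 1024 : 0;
    for (i = start; i + trailer_len <= len; i++) {
        if (memcmp(body + i, trailer, trailer_len) == 0) {
            return 1;
        }
    }
    return 0; /* header present but file appears truncated */
}
```

A check like this would have caught the HTTP 500 responses in the payslip example, because an error page does not begin with `%PDF-`.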

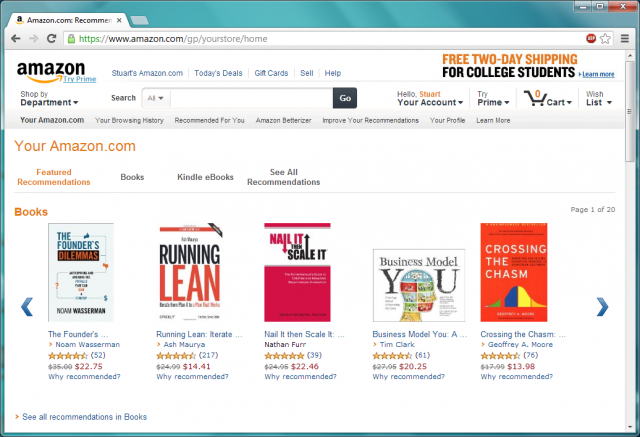

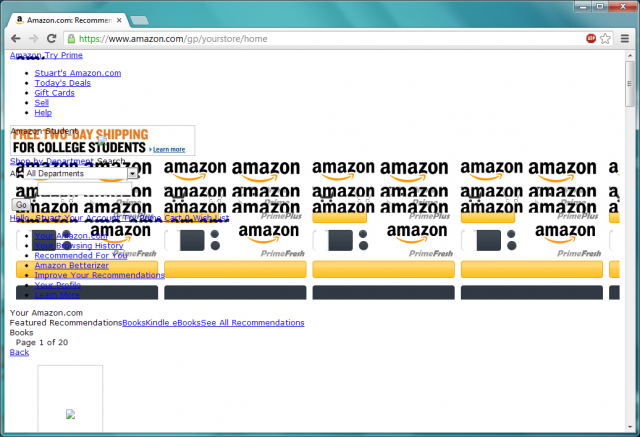

Even if you have successfully identified all the dynamic content types, and LoadRunner is treating them as non-resources, it is still important that all of the static content is downloaded successfully. By way of example, here is a screenshot of Amazon.com:

Here is the same page with most of the resources removed. A LoadRunner script with the "non-critical resource errors as warnings" runtime setting enabled does not detect this problem, and gives the transaction a status of "pass". If real users were seeing a website that looked like this when the system was under load, it would be a very high-severity defect, not something that should be ignored.

I can imagine that the business owners of the new IT system would be very unhappy if their users were seeing a "broken" web page like this when their system was under load, and their performance tester hadn't told them about it.

How resource errors can occur under load

The main reason for load testing is that an application can behave very differently under load to how it behaves when there is only a small number of users. It might run slowly, or requests that normally work fine might suddenly start returning errors. Here is a great example from an ASP.NET forum:

I have an ASP.NET application that uses both an HTTPModule and several HTTPHandlers. Under somewhat heavy load (~25 req/sec) the application begins to return HTTP 404 errors for requests of a specific handler.

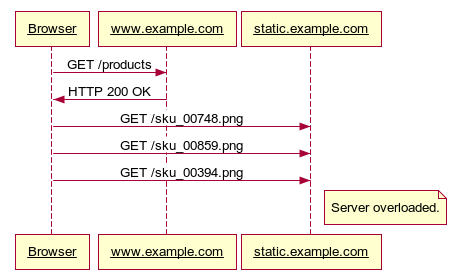

Even purely static content can behave differently when the system is under load. The sequence diagram below shows a product page being loaded successfully from www.example.com, but the product images are not loaded successfully, as they are hosted on another server which is overloaded. Perhaps LoadRunner gets a TCP-related error due to a connection limit ("failed to connect to server" or "server has shut down the connection prematurely") or an HTTP error code is returned.

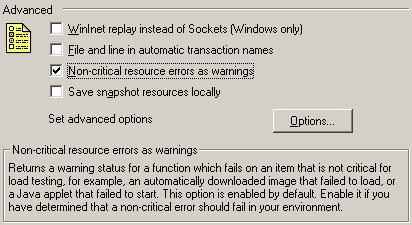

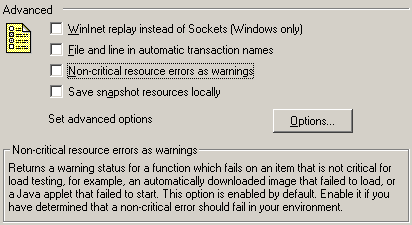

Recommended Runtime Settings

Web-based scripts should always disable (un-tick) the "Non-critical resource errors as warnings" checkbox in the script's runtime settings. This is the only way to be sure that this setting is not hiding errors during your test.

If your application seems to work fine manually, but your script fails during replay due to an HTTP 404 error for a resource, the correct work-around is to temporarily add a download filter for the URL that is failing, rather than hiding all errors of this type.

    // Requests for this resource are consistently receiving an HTTP 404 response. This
    // has been raised as defect #2096. When the defect is ready for re-testing, this
    // filter can be removed.
    web_add_auto_filter("Action=Exclude", "URL=https://www.example.com/resources/form-validation.js", LAST);

A bad default setting

If you have read this far, I am sure that you are wondering why HP leaves the "non-critical resource errors as warnings" setting enabled by default. We all know that errors are bad, and that the aim of testing under load is to discover errors, right?

It is easy to forget what it was like when you were just starting as a performance tester. Spare a thought for a junior performance tester who is attempting to record their first VuGen script. If their new script fails for a confusing reason (like an invisible 1x1 pixel spacer GIF being missing), then they might become discouraged. There is an obvious trade-off between this, and the risk of hiding serious application errors that occur under load.

Personally, I really want to help performance testers write better scripts; this is why I have developed the VuGen Validator, which highlights common scripting mistakes like enabling the "non-critical resource errors as warnings" runtime setting.

12 Comments


I think your second-last paragraph is dancing around the fact that HP does not disable the setting by default because it would increase their support costs and decrease their sales (because newbs would think the tool doesn’t work!)

Just in case anyone is interested, the sequence diagram was generated using http://www.websequencediagrams.com. This is a great tool for creating simple UML sequence diagrams.

    Browser->example.com: GET /products
    example.com->Browser: HTTP 200 OK
    Browser->static.example.com: GET /sku_00748.png
    Browser->static.example.com: GET /sku_00859.png
    Browser->static.example.com: GET /sku_00394.png
    note right of static.example.com: Server overloaded.

Thanks for the sequence diagram website. Am always interested in trying out new tools as well as learning new concepts (or even brushing up on some of the concepts)

Good job explaining this. I have even seen testers enabling “continue on error” just to “fix” the errors, haha.

Now what should be the Recommended settings for Non Resources?

Should we disable the text/html and text/xml before recording?

Hi,

In one of the applications I work on, I need to include a few filters and run the scripts. In this process I observed a 404 error, and the script fails at that point. Now I need to use both an exclude filter and an include filter, which LoadRunner does not accept. So, is there any custom code with which we can use both?

Regards,

Raj

Generally, treating non-critical resource errors as warnings is done to ensure script re-usability. Imagine a developer removing an image in a new build, and the LR script failing because of that.

In VuGen 11.51, I found an issue. When we enable “treat non-critical resources as warning”, VuGen treats a fake server URL as a warning as well. As a result, when a server is down, it does not even fail the script. This is a terrible setting.

Hi Rufeng,

I am facing the same issue with LR12. The script is passing with the warning “Warning -26627: HTTP Status-Code=404 (Not found)”, even though it is not customized with any correlations. Can you please tell me how to resolve this issue?

An element on one of your web pages is referenced in the HTML, but does not exist on the web server (causing the HTTP 404 response).

Eliminate the warning in your replay log by adding:

    web_add_auto_filter("Action=Exclude", "URL=http://www.yoursite.com/images/your-missing-image.jpg", LAST);

Now raise a low-severity defect. The web page should not be generating HTTP 404 errors under normal circumstances; and this should be very quick for them to fix.

HTTP 404 errors are bad (even when the page appears to render normally) because:

* they create a small but unnecessary delay for end users (an additional request round-trip to the web server)

* they add unnecessary noise to the web server logs, making it harder for an ops team to diagnose real problems

* they add a small but unnecessary amount of load to the server, and consume a small but unnecessary amount of additional bandwidth

Good luck with your testing!

Hi Stuart,

I do agree with most of what is stated in the article; it is great, valuable information. But I sense one contradiction in the PDF paragraph. If we are testing a web portal which generates pay slips, then the moment you click on the link to generate the pay slip you will get a web_custom_request with the link to the PDF as the main call. I can hardly see a possibility of LR creating a call in which the link to the PDF will go to extras…

Also, in my opinion, whether we should enable the option or not will depend on the type of application we are testing. If the module we are testing is informative, with the only intent being to show the description of production, then we can certainly check this option, or regenerate the script in URL mode, which will give us different custom_requests for each one of them, embedded between web_concurrent_start and web_concurrent_end…

If the objective of the pages we are testing is to do a business transaction, and the only image on the page is the company logo, then stopping the entire performance test due to a 404 for one image is not a good judgement. The focus is mainly on how much time the business transaction takes, not on the time taken by the image to load the first time; JPEG files usually add to the response time only the first time they are downloaded, and are subsequently served from cache. Hence, returning that transaction as an error and not completing the load test will delay the performance testing and may impact critical delivery dates…

Hi, I am getting an Application Log error while the script is executing. It is showing “Application error has occurred. Please refer to the application log details”. What might be the problem?