Performance testers sometimes get hung up on tool-based testing. Admittedly, most of the really interesting problems appear only when more than a few users are working concurrently on a multi-user system, but it is also important to verify that an application performs acceptably when it is not under load.

Even if response times seem fine during functional testing, the functional test team may not have thought to look at the effect of large datasets on response times. It’s not just a case of “do we need to put an index on a table somewhere?”; sometimes response times grow much faster than linearly (for example, quadratically) as the dataset grows.

This is especially noticeable with poorly written batch jobs. The job might take 1 minute to process 1,000 records, but it does not necessarily follow that processing 100,000 records will take 100 minutes.
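To make the arithmetic concrete, the small Python sketch below scales the 1-minute, 1,000-record baseline up to 100,000 records under two assumed growth models: linear and quadratic. The function name `projected_minutes` and the choice of a quadratic model are assumptions for illustration only; a real batch job may scale differently.

```python
def projected_minutes(base_minutes: float, base_records: int,
                      target_records: int, exponent: float) -> float:
    """Scale a measured run time to a larger record count, assuming the
    run time grows as records ** exponent (1 = linear, 2 = quadratic)."""
    scale = target_records / base_records
    return base_minutes * scale ** exponent


# Baseline from the text: 1 minute for 1,000 records.
linear = projected_minutes(1, 1_000, 100_000, exponent=1)
quadratic = projected_minutes(1, 1_000, 100_000, exponent=2)

print(f"linear:    {linear:,.0f} minutes")
print(f"quadratic: {quadratic:,.0f} minutes (about {quadratic / 60 / 24:.1f} days)")
```

The linear model gives the naive 100 minutes. The quadratic model gives 10,000 minutes, which is roughly a week.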

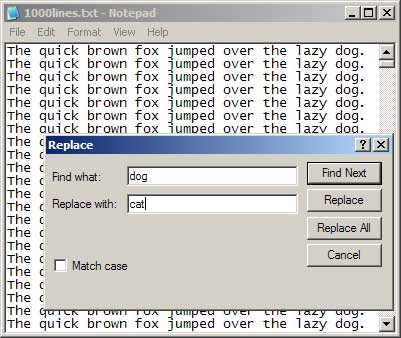

For an example of an application that seems to have implemented Shlemiel the painter’s algorithm (Joel Spolsky’s name for an algorithm that keeps going back to the beginning to redo work it has already done), take the Replace function in Notepad. Performing a find-and-replace on a small file is very fast, but performance quickly degrades as the file gets larger.
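Notepad’s actual code is not public, so the following Python sketch is only a hypothetical illustration of the pattern, not how Notepad works. `shlemiel_replace` restarts its search from the start of the text and copies the whole string after every replacement, so its cost grows roughly with the square of the file size. `single_pass_replace` resumes each search where the previous match ended and builds the result once, so its cost grows linearly. Both function names are invented for this example.

```python
def shlemiel_replace(text: str, old: str, new: str) -> str:
    """Quadratic pattern: after every replacement, copy the whole string and
    search again from the very beginning.

    Assumes no replacement can create a new occurrence of `old`; otherwise
    this can give a different result from str.replace (or never finish).
    """
    if not old:
        raise ValueError("old must be a non-empty string")
    while True:
        index = text.find(old)  # always rescans from position 0
        if index == -1:
            return text
        # Building a new string copies all of `text`: O(len(text)) per match.
        text = text[:index] + new + text[index + len(old):]


def single_pass_replace(text: str, old: str, new: str) -> str:
    """Linear pattern: scan once, resuming each search where the last match
    ended, and join the collected pieces at the end."""
    if not old:
        raise ValueError("old must be a non-empty string")
    pieces = []
    start = 0
    while True:
        index = text.find(old, start)  # continue from the end of the last match
        if index == -1:
            pieces.append(text[start:])
            return "".join(pieces)
        pieces.append(text[start:index])
        pieces.append(new)
        start = index + len(old)
```

Both functions return the same result on ordinary input. If you time them (for example with `timeit`) on files of increasing size, the first should slow down far faster than the second.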

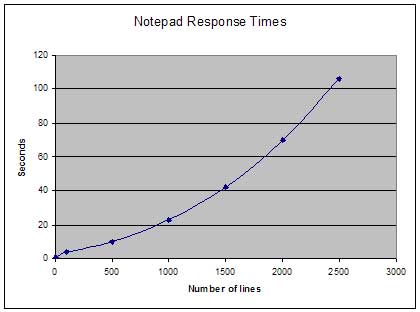

As you can see from the graph, response times grow much faster than linearly as the file size increases.
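Reading a curve by eye works, but a rough number can also help. A common rule of thumb is to fit a straight line to log(time) against log(size): a slope near 1 suggests linear growth, and a slope near 2 suggests quadratic growth. The sketch below does this in Python. `nested_loops` is a hypothetical stand-in for whatever operation is being timed. Taking a single wall-clock measurement per size is a simplification; repeating each run and keeping the fastest time would reduce noise.

```python
import math
import time
from typing import Callable, Sequence


def time_call(func: Callable[[int], object], size: int) -> float:
    """Return the wall-clock seconds taken by a single call func(size)."""
    start = time.perf_counter()
    func(size)
    return time.perf_counter() - start


def growth_exponent(sizes: Sequence[float], seconds: Sequence[float]) -> float:
    """Least-squares slope of log(time) against log(size).

    Roughly 1 suggests linear growth, roughly 2 quadratic growth.
    """
    xs = [math.log(n) for n in sizes]
    ys = [math.log(t) for t in seconds]
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    variance = sum((x - mean_x) ** 2 for x in xs)
    return covariance / variance


def nested_loops(n: int) -> int:
    """Hypothetical stand-in for the operation under test: O(n**2) work."""
    count = 0
    for _ in range(n):
        for _ in range(n):
            count += 1
    return count


if __name__ == "__main__":
    sizes = [200, 400, 800, 1600]
    timings = [time_call(nested_loops, n) for n in sizes]
    # For this quadratic stand-in the estimate should land somewhere near 2.
    print(f"estimated exponent: {growth_exponent(sizes, timings):.2f}")
```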

With an even larger file of 10,000 lines, the operation takes 28 minutes to complete. Compare this with another text editor, TextPad, which processed every file of up to 10,000 lines in less than 1 second.

If you would like to experiment with this feature, text files of different sizes are available here (155kB zipped).
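Similar files can also be generated locally. The sketch below is an assumption-laden stand-in: the contents of the original files are not known, so every generated line is the same hypothetical sentence containing one occurrence of the word “fox” to find and replace. The helper name `write_test_files` and the file-naming scheme are also invented for this example.

```python
from pathlib import Path
from typing import Iterable, List

# Assumption: the contents of the original downloadable files are not known.
# Each generated line is the same hypothetical sentence, so every line
# contains one occurrence of the word "fox" to find and replace.
LINE = "The quick brown fox jumps over the lazy dog.\n"


def write_test_files(directory: Path, line_counts: Iterable[int]) -> List[Path]:
    """Write one file per line count (e.g. lines_1000.txt) and return the paths."""
    directory.mkdir(parents=True, exist_ok=True)
    paths = []
    for count in line_counts:
        path = directory / f"lines_{count}.txt"
        path.write_text(LINE * count, encoding="ascii")
        paths.append(path)
    return paths
```

For example, `write_test_files(Path("notepad_tests"), [1_000, 2_000, 5_000, 10_000])` writes four files into a `notepad_tests` folder. The files are written in text mode, so Python uses the platform’s line ending. On Windows they therefore get the CRLF endings that older versions of Notepad expect.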

4 Comments


I once worked with a guy from IBM who maintained that “Notepad is the best piece of software that Microsoft has ever written”.

When I pointed out all the features that were missing when compared to almost any other text editor, he stuck to his original opinion.

“Notepad doesn’t pretend to be all things to all people. Microsoft implemented a small number of features, and they just work.”

I guess that is a valid enough point, although, being a longtime Linux user, I think he just liked the idea of Microsoft’s best software being a tiny, feature-poor tool that has been implemented better by everyone else who has ever tried.

So true…

I totally agree. In my experience, this is all too often the case. And I don’t think the problem is only tool-based testing; it’s also getting hung up on load testing, even a low-tech, 20-people-in-a-room test. Performance testing and load testing are two very different things. Performance testing when a system is not under load is a critical test to conduct.

I should point out one thing to keep in mind. (I’m not saying you missed the mark; it wasn’t applicable to your test, so it just wasn’t covered.) A system can actually perform better as load increases, because cache hit ratios improve and modules stay loaded in memory. Again, performance testing under zero load is critical, as we would not want to penalize the lone off-hours user who gets a 0% cache hit ratio.
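The caching effect described in this comment can be illustrated with Python’s `functools.lru_cache`, which reports hits and misses through `cache_info()`. `lookup` is a hypothetical stand-in for an expensive read such as a database query. Real caches (database buffers, loaded modules) behave far less simply, so treat this only as a toy model.

```python
from functools import lru_cache


@lru_cache(maxsize=128)
def lookup(key: int) -> int:
    """Hypothetical expensive read; the result is cached after the first call."""
    return key * key


def hit_ratio() -> float:
    """Fraction of lookup() calls served from the cache since the last clear."""
    info = lookup.cache_info()
    total = info.hits + info.misses
    return info.hits / total if total else 0.0


# A lone off-hours user on a freshly started system: every lookup misses.
lookup.cache_clear()
for key in range(10):
    lookup(key)
print(f"cold cache: {hit_ratio():.0%} hits")   # cold cache: 0% hits

# Fifty users requesting the same ten popular keys: only the first ten calls miss.
lookup.cache_clear()
for _user in range(50):
    for key in range(10):
        lookup(key)
print(f"busy system: {hit_ratio():.0%} hits")  # busy system: 98% hits
```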

Hi,

How were you able to draw the graph of the ‘Find and Replace’ timings? Kindly reply to my ID. Thanks in advance.